INTRODUCTION

Up to 75% of anterior cruciate ligament (ACL) injuries occur without direct contact with another player (i.e., non-contact), predominantly during deceleration and changes of direction (COD) with a planted lower limb, characterized by an increased knee abduction moment (KAM).1–3 Females have a higher ACL injury rate per athletic exposure compared to their male counterparts, with injury incidence rate ratios (IRR) reported to be 1.40 to 3.00.4–7 Female basketball players have been shown to have a higher injury rate than their male counterparts, with IRRs reported to be as high as 4.14.8 Given this disproportionate incidence of ACL injury in female athletes, further investigation is warranted.

Researchers have used 3-dimensional (3D) movement analysis combined with force plate technology to identify kinetic and kinematic variables associated with ACL injuries, typically during drop landing or COD movements.9–13 This approach offers a thorough assessment of an athlete’s movement quality during sport-specific movements, with KAM as a significant predictor of ACL injury and a surrogate measure of ACL injury risk.14 However, 3D movement analysis and the use of force plates are not feasible in a clinical setting or with field-based testing.14 Two-dimensional (2D) video analysis offers a more practical solution to clinical movement assessment.

Several methods of 2D movement analysis during a COD task have been proposed across various populations. Of these assessment tools, the Cutting Movement Assessment Score (CMAS) has had the most published investigations into its validity and reliability.10,11,15–19 Utilizing a nine-item scoring rubric, the CMAS allows a practitioner to evaluate an athlete’s movement quality during a 45-90° COD maneuver, with a higher score suggesting a greater risk of ACL injury.10,11,15 Studies by Jones et al.10 and Dos’Santos et al.11 have demonstrated criterion-related validity of CMAS scores compared to peak KAM values.

The reliability of the CMAS has been evaluated in several studies. Intra-rater and inter-rater reliability for total scores have been reported as moderate to excellent, with intra-class correlation coefficients (ICC) of 0.70-0.95 and 0.58-0.91, respectively.11,16–18,20 Only one study, by Aparicio-Sarmiento et al.,19 identified poor inter-rater reliability for total CMAS scores (ICC = 0.11-0.45). However, their findings may have resulted from insufficient training regarding the use of the CMAS. Several studies have also investigated the percentage agreement and kappa coefficient across individual CMAS items, with most items demonstrating moderate to excellent reliability.10,11,17,18

Prior investigations of the CMAS’s reliability have primarily involved “biomechanists” and strength and conditioning staff without specifying their education or professional credentials. Jones et al.18 assessed the reliability of the CMAS across a “biomechanist,” strength and conditioning coach, sprint coach, physical therapist (PT), and a “sports rehabilitator.” Credentials were not specified for these practitioners, but each had at least five years of experience in their profession, including cutting movement quality assessment. Good to excellent inter-rater reliability (k = 0.63-0.84) was observed across all practitioners for total scores. These findings offer promising considerations for the use of the CMAS across disciplines. However, the intra-rater and inter-rater reliability of the CMAS among PTs has yet to be investigated. PTs commonly assess movement quality in return-to-sport and injury-prevention contexts. Further investigation into the use of this tool among PTs is therefore warranted, as it could support its application in clinical practice and increase accessibility to injury risk screening.

A PT’s level of clinical experience may also influence CMAS scoring. Dos’Santos et al.11 compared CMAS scoring between three biomechanists with differing levels of experience (7 years, 17 years, and a recent graduate), identifying moderate inter-rater reliability (ICC = 0.690). The investigation did not report the raters’ academic degrees or credentials. Such an investigation using PTs as raters can provide greater insight into the utility of the CMAS across clinical experience levels, particularly with the inclusion of additional educational training and board certifications.

The primary aim of this study was to assess the reliability of the CMAS in female basketball players when utilized by PTs with varying levels of clinical experience and expertise. The investigators hypothesized that the CMAS would yield good to excellent intra-rater and inter-rater reliability when used by PTs across varied experience levels.

METHODS

Study Design

This study used a quantitative cross-sectional design to collect video recordings of subject cutting trials that were later screened using the CMAS. The study was approved by Rocky Mountain University’s (RMU) Institutional Review Board (IRB) and each participating subject’s respective collegiate IRB.

Participants

Female athletes actively participating on a U.S. National Collegiate Athletic Association (NCAA) basketball team were recruited to participate in this investigation. The inclusion and exclusion criteria are provided in Table 1.

Individuals who had experienced previous ACL injury but were medically cleared to participate in their sport by a multidisciplinary healthcare team were permitted to participate. Only one subject reported a prior ACL injury and was included in this investigation, given that trials were only compared within subjects. Convenience sampling was utilized from D2 and D3 colleges and universities until adequate power was obtained. Divisions 2 and 3 were purposefully chosen because of their accessibility to the investigators, allowing for efficient data collection. Power was calculated a priori using wnarifin.github.io, determining that a minimum of 18 subjects were needed to obtain an intra-class correlation coefficient (ICC) of 0.60.21 This ICC was chosen to confidently fall within or above ‘moderate’ reliability.22 All subjects were made aware of the potential risks and benefits of the study, that participation was voluntary, and the inclusion and exclusion criteria for participation prior to completing IRB-approved informed consent documents.

Procedures

An in-person informational session was provided to all subjects on a separate date prior to testing to review the informed consent process and provide testing instructions. Athletes were encouraged to maintain their regular diet, sleep schedule, and exercise routine for the 24 hours prior to testing and were discouraged from participating in exercise before the testing session. They were instructed to wear the basketball footwear that they would typically wear for practice and/or games, avoid loose-fitting clothing, avoid dark clothing, and tuck in their shirts. At testing, subjects completed a questionnaire in Qualtrics XM (Qualtrics, Provo, UT) on their smart devices to provide demographic information, followed by anthropometric measurements collected by an investigator. The collection of age, height, and mass for subject descriptive demographics is consistent with previous methodological use of the CMAS.11,16,19

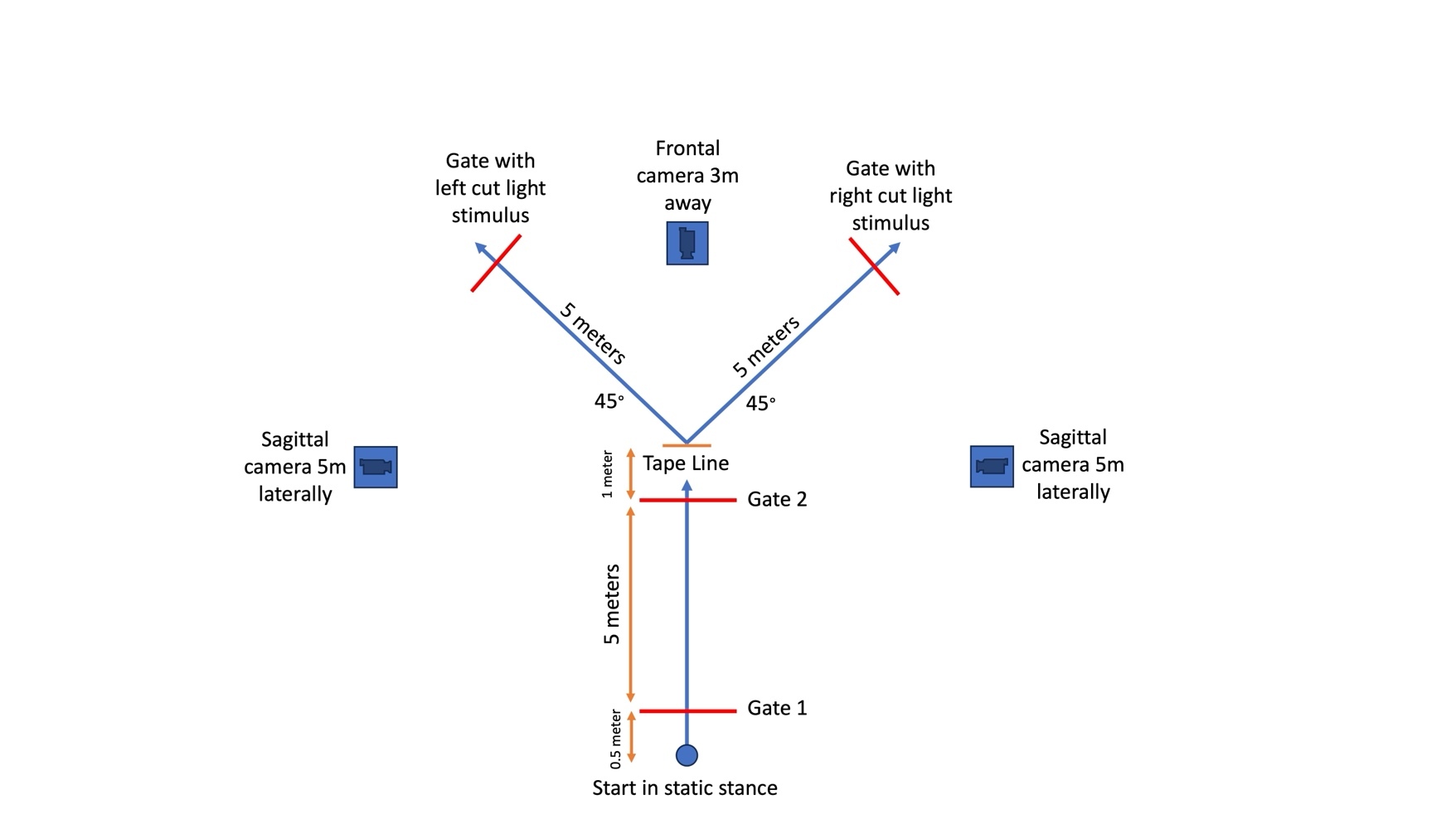

Testing was conducted on each team’s home basketball court to replicate the athlete’s natural playing environment. Subjects completed a 5–10-minute self-selected dynamic warm-up prior to testing and two practice trials in each direction and condition (planned and unplanned). The testing setup (Figure 1) paralleled what is described by Dos’Santos et al.15 and the reactive agility test (RAT) as detailed by Henry et al.23 The subjects were instructed to forward sprint 5 meters as fast as possible, change direction to the left or right after passing through the designated center gate, and perform a 45° side-step cut to the left or right to a separate gate. They were instructed to change direction prior to reaching a tape line located one meter after the center gate to promote a side-step cut maneuver at the video-recorded area rather than a change of direction occurring farther away, which would invalidate the video assessment. Participants performed four left and four right 45° side-step cuts, half of which were planned and half unplanned. Zybek Sports Powerdash Athlete Timing gates (Westminster, CO) were utilized to determine forward sprint time and total time to completion, with this information being used for research aims not included in this manuscript. A gate was located 45° to the left and another to the right to denote the cutting direction. For planned trials, the athletes were given prior knowledge of which direction they were to cut. For unplanned trials, after the athlete moved through the center gate plane at the COD point, a red light appeared at the gate that the athlete was directed to move toward. A randomized task order was used to reduce order effects or the effects of fatigue, as demonstrated by Needham and Herrington.16

Two-dimensional videos of each COD trial were recorded in the frontal and sagittal planes using three Apple iPad (Cupertino, CA) devices, all running iOS 17 and recording at 120 frames per second (fps), exceeding the minimum of 100 fps recommended by Dos’Santos et al.15 The iPads were mounted on tripods at a height of 50 inches and placed anterior and lateral to the COD point to record in the frontal and sagittal planes (Figure 1). An example of the 2D recording of the COD task is shown in Figure 2.

Qualitative Assessment

Three PTs served as raters to analyze subject COD movement quality, all of whom were licensed PTs and held Doctor of Physical Therapy degrees from an accredited institution. Two PTs were considered ‘experts’; both were dual board-certified clinical specialists in Orthopaedic and Sports Physical Therapy by the American Board of Physical Therapy Specialties (ABPTS) and had primary clinical experience in an outpatient orthopaedic and sports setting. Expert 1 held eight years of clinical experience and had completed an orthopaedic residency. Expert 2 held 18 years of clinical experience, had completed a sports residency, and was also a Certified Strength and Conditioning Specialist (CSCS) and a Fellow of the American Academy of Orthopaedic Manual Physical Therapists (FAAOMPT). The third rater, considered the ‘novice,’ was an entry-level PT with less than two years of general outpatient clinical experience and no clinical specialization through the ABPTS. While both experts had clinical experience with movement assessment, including COD maneuvers, only Expert 1 had prior experience with the CMAS, and that experience was infrequent and did not include formal training in the test. All raters participated in a one-hour training session and a 30-minute follow-up session on the CMAS led by one of the developers of the CMAS prior to completing any video analyses on subject trials. The first session focused on reviewing the operational definitions of each item, as listed by Dos’Santos et al.15 (Appendix A), and walking through a video analysis as a group. All raters then independently completed grading for a separate practice trial and then met for the second session to discuss scoring justification and revisit the operational definitions as needed.

Stratified random sampling was utilized to select twenty subject trials across participating teams, cut directions, and planned/unplanned conditions. Each rater reviewed video recordings separately using OnForm software (Bellvue, CO). OnForm allows reviewers to alter playback speed and draw joint angles to assist in reviewing videos, all of which can be done on any smart device or computer. Both experts had experience utilizing this software, but only for running gait analysis, whereas the novice had no experience with it. Reviewers graded the movement task using the CMAS rubric (Table 2) and were unaware of the other reviewers’ scores. There was no protocol for video review, and each rater was able to freely alter playback speed and review each video as many times as needed. For each item, subjects received a score of 0, 1, or 2 based on whether the reviewer identified the presence or absence of the corresponding movement pattern (Table 2). Subjects then received a total score of 0-11. Planned and unplanned trials were graded together. The lead researcher (Expert 1) repeated grading on the same twenty trials in the same manner after a 130-day wash-out period to assess intra-rater reliability.

Statistical Analysis

Intra-rater reliability for Expert 1 and inter-rater reliability for all raters were determined by calculating the ICC for total CMAS scores using Intellectus Software (Palm Harbor, FL) and DanielSoper.com (Fullerton, CA). The ICC was interpreted using the following guidelines by Koo and Li22: poor: <0.50, moderate: 0.50-0.75, good: 0.75-0.90, and excellent: >0.90. For individual CMAS items, the percentage agreement (agreements / (agreements + disagreements) × 100) and kappa coefficients (k) were calculated. Percentage agreements were interpreted consistent with Dos’Santos et al.11 using the following scale: poor: ≤50%, moderate: 51-79%, excellent: ≥80%. Kappa coefficients were calculated using the following formula: k = (Pr(a) – Pr(e)) / (1 – Pr(e)), where Pr(a) = relative observed agreement between raters and Pr(e) = hypothetical probability of chance agreement. The kappa coefficient was interpreted according to Landis and Koch24: slight: 0.01-0.20, fair: 0.21-0.40, moderate: 0.41-0.60, good: 0.61-0.80, and excellent: 0.81-1.00.
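The item-level calculations described above can be illustrated with a minimal Python sketch. It is offered only as an illustration and is not the software used in this study. It assumes two raters, complete data, and an unweighted Cohen's kappa; the function names and the example scores are hypothetical, and the label returned for kappa values at or below zero is an assumption, because the scale quoted above begins at 0.01.

```python
from collections import Counter


def percentage_agreement(rater_a, rater_b):
    """agreements / (agreements + disagreements) x 100 for one CMAS item."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must score the same non-empty set of trials.")
    agreements = sum(a == b for a, b in zip(rater_a, rater_b))
    return 100.0 * agreements / len(rater_a)


def cohens_kappa(rater_a, rater_b):
    """k = (Pr(a) - Pr(e)) / (1 - Pr(e)) for two raters (unweighted)."""
    n = len(rater_a)
    pr_a = percentage_agreement(rater_a, rater_b) / 100.0
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement: product of the raters' marginal proportions,
    # summed over every score category either rater used.
    categories = set(counts_a) | set(counts_b)
    pr_e = sum(counts_a[c] * counts_b[c] for c in categories) / (n * n)
    if pr_e == 1.0:
        return float("nan")  # undefined: both raters used one identical category
    return (pr_a - pr_e) / (1.0 - pr_e)


def interpret_agreement(pct):
    """Percentage-agreement bands quoted in the text."""
    if pct >= 80:
        return "excellent"
    if pct > 50:
        return "moderate"
    return "poor"


def interpret_kappa(k):
    """Kappa bands quoted in the text; the label for k <= 0 is an assumption."""
    if k > 0.80:
        return "excellent"
    if k > 0.60:
        return "good"
    if k > 0.40:
        return "moderate"
    if k > 0.20:
        return "fair"
    if k > 0:
        return "slight"
    return "no agreement beyond chance"


if __name__ == "__main__":
    # Hypothetical scores for one item across ten trials (not study data).
    expert = [1, 0, 1, 1, 0, 1, 0, 0, 1, 1]
    novice = [1, 0, 1, 0, 0, 1, 0, 1, 1, 1]
    pct = percentage_agreement(expert, novice)
    k = cohens_kappa(expert, novice)
    print(f"agreement = {pct:.0f}% ({interpret_agreement(pct)})")
    print(f"kappa = {k:.2f} ({interpret_kappa(k)})")
```

Because kappa corrects for chance agreement, an item can show high percentage agreement and a comparatively low kappa when most trials fall into a single score category.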

RESULTS

A total of twenty female athletes (n=20, age: 19.45 ± 1.19 yrs; body mass 68.88 ± 13.17 kg; height: 167.95 ± 6.76 cm) actively participating on a U.S. National Collegiate Athletic Association (NCAA) basketball team were recruited to participate in this investigation, with four separate teams included in this study (Table 3, Table 4). All subjects were Tier 3 participants (Highly Trained/National Level) according to the classification framework from McKay et al.25

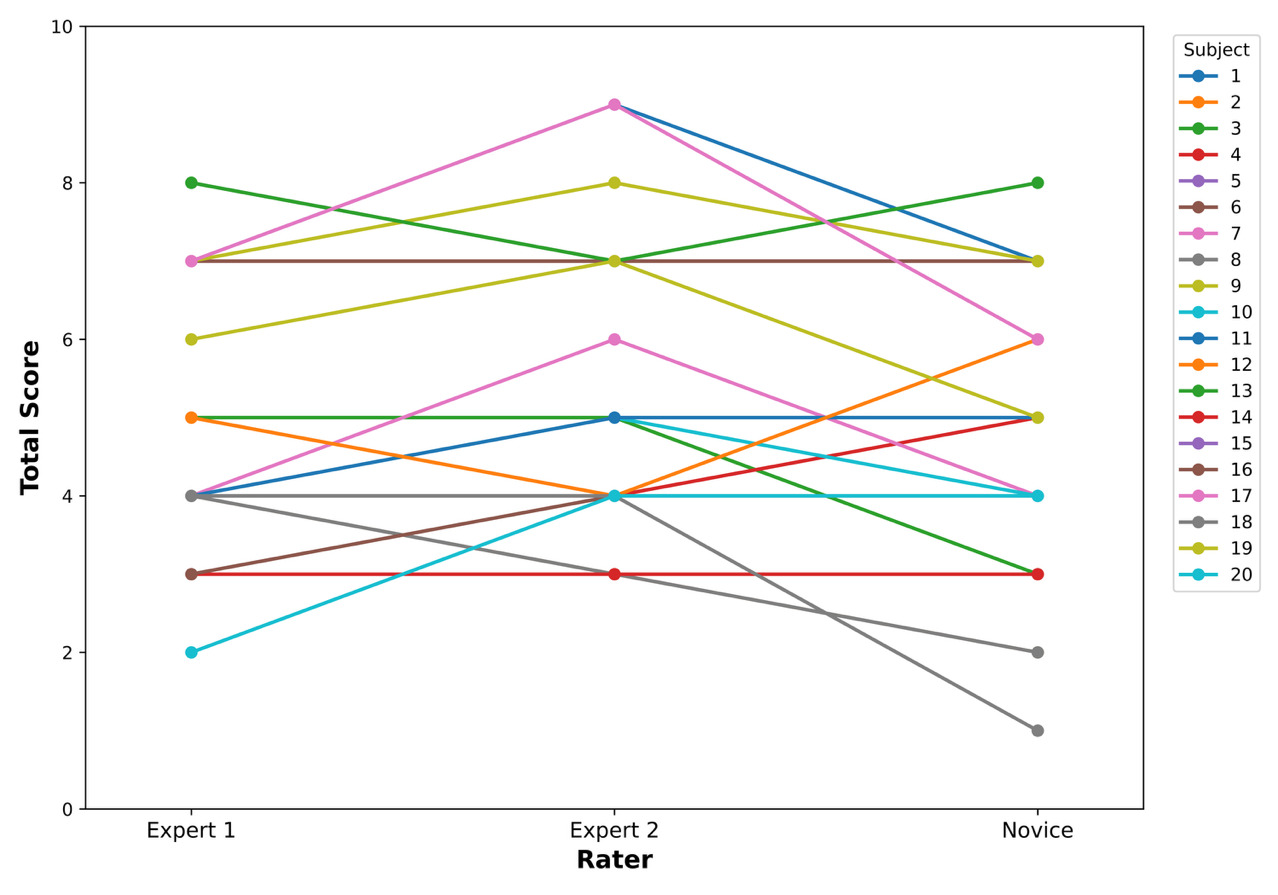

Descriptive statistics for each rater’s total scores are provided in Table 5. The total CMAS scores assigned to each subject by the individual raters are shown in Figure 3. Expert 2 had the highest mean total score (5.40), whereas the novice had the lowest (4.70). Intra-rater reliability was excellent for CMAS total scores (ICC = 0.96).

For intra-rater agreement on individual CMAS items, percentage agreement was excellent (80-100%), and kappa coefficients were moderate to excellent (k = 0.59-1.00) (Table 6).

Inter-rater reliability was moderate for CMAS total scores across all raters (ICC = 0.67), good between Experts 1 and 2 (ICC = 0.84), moderate between Expert 2 and the novice rater (ICC = 0.49), and good between Expert 1 and the novice rater (ICC = 0.84). All percentage agreements and kappa coefficients between each pair of raters are presented in Table 6. Percentage agreements were highest between Expert 1 and the novice rater, ranging from moderate to excellent (85-90%). However, kappa coefficients were highest between Experts 1 and 2, ranging from moderate to good (k = 0.47-0.68). Expert 2 and the novice rater had the lowest average percentage agreement (60-90%) and kappa coefficients (k = 0.12-0.75), both with the widest ranges.
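For readers who wish to run a comparable total-score analysis on their own data, the following Python sketch computes an ICC from a trials-by-raters table and applies the Koo and Li bands. The ICC form used in this study is not stated in the text, so the choice of ICC(2,1) (two-way random effects, absolute agreement, single rater) is an assumption, as are the function names, the handling of values that fall exactly on a band boundary, and the example scores. The values reported above were obtained with the software named in the Statistical Analysis section, not with this code.

```python
def icc_2_1(scores):
    """ICC(2,1): two-way random effects, absolute agreement, single rater.

    `scores` is a list of rows, one per trial, each holding one total
    score per rater. The choice of this ICC form is an assumption.
    """
    n = len(scores)                      # number of trials
    k = len(scores[0]) if scores else 0  # number of raters
    if n < 2 or k < 2 or any(len(row) != k for row in scores):
        raise ValueError("Need at least 2 trials and 2 raters, with no missing scores.")

    grand = sum(sum(row) for row in scores) / (n * k)
    row_means = [sum(row) / k for row in scores]
    col_means = [sum(row[j] for row in scores) / n for j in range(k)]

    # Two-way ANOVA sums of squares (one observation per cell).
    ss_total = sum((x - grand) ** 2 for row in scores for x in row)
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)   # between trials
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)   # between raters
    ss_error = ss_total - ss_rows - ss_cols

    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))

    denom = ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    if denom == 0:
        return float("nan")  # undefined when every score is identical
    return (ms_rows - ms_error) / denom


def interpret_icc(icc):
    """Koo and Li bands as quoted in the text.

    Boundary handling is an assumption: 0.75 is treated as good and
    0.90 as good, since the quoted ranges share those endpoints.
    """
    if icc > 0.90:
        return "excellent"
    if icc >= 0.75:
        return "good"
    if icc >= 0.50:
        return "moderate"
    return "poor"


if __name__ == "__main__":
    # Hypothetical total CMAS scores (0-11) for six trials and three raters;
    # these values are illustrative only and are not study data.
    totals = [
        [5, 6, 4],
        [3, 4, 3],
        [7, 8, 6],
        [2, 3, 3],
        [6, 6, 5],
        [4, 6, 4],
    ]
    icc = icc_2_1(totals)
    print(f"ICC(2,1) = {icc:.2f} ({interpret_icc(icc)})")
```

Passing only two columns (for example, one rater's first and second grading sessions, or one pair of raters) gives the corresponding intra-rater or pairwise estimate under the same assumptions.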

DISCUSSION

The primary purpose of this investigation was to determine the intra-rater and inter-rater reliability of the CMAS when utilized by PTs at different levels of clinical experience and expertise. The CMAS demonstrated excellent intra-rater reliability for Expert 1 (ICC = 0.96) and moderate inter-rater reliability across all raters for total scores (ICC = 0.67). A comparison of the intra-rater agreement for individual item scoring for Expert 1 showed excellent percentage agreement (80-100%) and moderate to excellent kappa coefficients (k = 0.59-1.00). Inter-rater agreement for individual items had moderate to excellent percentage agreement across all rater comparisons (60-100%), and the majority of the kappa coefficients were moderate or better (≥0.41). These findings differ from the investigators’ hypothesis that the CMAS would demonstrate good to excellent inter-rater and intra-rater reliability, but only in that inter-rater reliability was moderate. However, the results parallel previous investigations regarding the reliability of the CMAS for raters in different disciplines.10,11,16–18,20 It should be noted that this investigation of CMAS intra-rater reliability utilized a much longer washout period (130 days) than previous studies on the CMAS, which used only seven days.11,16 Similar findings over this longer period of time further support its intra-rater reliability.

The results of this study support the clinical utility of the CMAS in a rehabilitation setting, as the CMAS showed moderate to excellent reliability when used by PTs, even across different levels of clinical experience and expertise. A previous study by Jones et al.18 investigated inter-professional reliability across disciplines, including a PT, finding good to excellent inter-rater reliability for total scores (k = 0.63–0.84) and moderate to excellent inter-rater agreement for individual items (k = 0.5–1.0). However, this is the first investigation of the CMAS’s reliability among PTs alone. Minick et al.8 investigated the inter-rater reliability of the Functional Movement Screen™ (FMS™) across two novice raters (<1 year of experience using the FMS™) and two expert raters (developers of the FMS™ with >10 years of experience with the tool). They demonstrated excellent to substantial agreement between novice and expert raters for each item of the FMS™ using percentage agreement and kappa coefficients. Their investigation suggested that, as long as individuals completed the standardized training protocol, their scoring would be similar. The findings of this current investigation offer similar suggestions for the utilization of the CMAS. While this study included the ideal circumstance of formal training by one of the CMAS developers, this may not be realistic for all practitioners. Alternatively, completing standardized training sessions that review the operational definitions of each item and include group practice with the tool may be a sufficient means of establishing an acceptable level of reliability among PTs. The utilization of operational definitions from published guidelines, such as those of Dos’Santos et al.,15 coupled with collaboration among raters would improve the reliable utilization of this tool.

PTs serve as integral members of healthcare teams, with key roles in ACL injury mitigation and return-to-sport decision-making. However, a review by Burgi et al.26 identified that return-to-sport decisions after ACL reconstruction (ACLR) are typically made based on a narrow number of variables, often limited to time from injury and impairment-based measures (such as knee laxity and strength). COD movement quality is rarely assessed with 2D analysis in clinical practice after ACLR, despite findings that COD is a common movement in multidirectional sports and is a prominent mechanism of ACL injury.2,26–31 The CMAS has been deemed a valid assessment of movement characteristics associated with ACL injury risk compared to 3D movement analysis.10,11 The integration of the CMAS into PT clinical practice would bolster the comprehensiveness of primary and secondary ACL injury risk screening and mitigation in a profession that plays a critical role in ACL injury management. In particular, assessing movement quality as part of a return-to-sport testing battery after ACL injury and ACLR has been recommended by several review publications.26,32–35 This study suggests that the CMAS has moderate to excellent reliability among PTs and should be considered a useful means of movement assessment in clinical practice.

While overall inter-rater reliability was moderate (ICC = 0.67), it should be noted that reliability varied between rater pairs. Pairwise comparisons showed that agreement was highest between Experts 1 and 2 (ICC = 0.84; good), as well as between Expert 1 and the novice rater (ICC = 0.84; good), and lowest between Expert 2 and the novice rater (ICC = 0.49; moderate). Expert 2 (mean score of 5.40), Expert 1 (mean score of 5.05), and the novice rater (mean score of 4.70) held 18 years, 8 years, and one year of clinical experience, respectively. While the varied levels of clinical experience among the included raters still resulted in at least moderate inter-rater reliability, these results may suggest a potential relationship between the ability to identify movement faults and years of clinical experience. More experienced clinicians may have a higher sensitivity for detecting movement faults or hold a different perceptual bias than a novice clinician. The individual items with the greatest discrepancies between Expert 2 and the novice clinician included Items 3, 6, and 7, which relate to femoral rotation and trunk position. These items may be less obvious to visually detect than knee valgus or foot position, and a more experienced clinician may be more attuned to detecting these movement faults.

Lastly, this investigation using the CMAS differed from previous investigations in that video recording was completed using Apple iPad devices and OnForm movement analysis software. These may be more clinically accessible to PTs than purchasing additional standalone cameras or using software such as Kinovea that is not accessible on all operating systems, as utilized in previous studies.10,11,16 Increased accessibility of equipment and software allows for more practical utilization of the CMAS in common PT clinical settings.

Limitations

There are limitations to this study that must be acknowledged. First, both planned and unplanned movement conditions were utilized in this investigation. The differences in reliability between these movement conditions were not evaluated. Movement mechanics and timed performance vary in planned versus unplanned conditions.36–40 These variations may affect rater scoring and, consequently, rater reliability. Future research should consider exploring the reliability of the CMAS by distinguishing between planned and unplanned movement conditions. Another limitation of this study is that it only included three raters. Future investigations should consider utilizing a greater number of raters and assessing reliability across separate subject testing sessions to further confirm the reliability of this tool. An additional limitation is that subjects were restricted to female collegiate basketball players without any existing or recent injuries, as outlined in the exclusion criteria. Although subjects were drawn from multiple teams across two different divisions, the applicability of the study’s findings to other populations may be limited.

Physical therapists play a significant role in post-injury return-to-sport decision-making, especially after ACLR. Future studies should examine the use of the CMAS by physical therapists in various athletic populations, with a particular focus on post-injury and post-operative assessments. Investigating this tool in post-injury and post-operative subjects would extend its applicability to the circumstances in which clinicians are most likely to use the CMAS.

CONCLUSIONS

The results of this study demonstrated that the CMAS holds excellent intra-rater and moderate inter-rater reliability for total scores when utilized by PTs at different levels of clinical experience and expertise. Percentage agreement for individual items between raters was moderate to excellent, and kappa coefficients were slight to excellent. While these findings suggest that the CMAS can be used effectively and reliably by PTs with various levels of clinical experience and expertise, there are some indications of a potential relationship between the ability to identify movement faults and years of clinical experience. Standardized training on the use of the CMAS to discuss operational definitions and compare scoring is advised to minimize variability in scoring. The findings of this investigation support the integration of the CMAS into routine movement assessments to identify at-risk athletes and guide targeted movement retraining.

Corresponding Author

Evan Andreyo, PT, DPT, PhD

Department of Physical Therapy, School of Health Professions, Augustana University, Sioux Falls, SD, 57197

Email Address: evanandreyo@gmail.com

Conflict of Interest Statement

The authors declare that they have no known conflicts of interest related to the work reported in this paper.

Acknowledgements

The authors thank Dr. Jon Sherbert for participation as a rater in this investigation.